Breaking out to improve cohesion (peer detection techniques)

Published by Manuel Rivero on 10/11/2025

1. Introduction.

In previous posts[1] we talked about the importance of distinguishing an object’s peers from its internals in order to write maintainable unit tests, and how the peer-stereotypes help us detect an object’s peers.

When we use the term object in this post, we are using the meaning from the Growing Object-Oriented Software, Guided by Tests (GOOS) book:

Objects “have an identity, might change state over time, and model computational processes”. They should be distinguished from values which “model unchanging quantities or measurements” (value objects). An object can be an internal or a peer of another object.

This post presents three effective techniques for discovering value and object types (breaking out, budding off and bundling up), and then goes deep into one of them: breaking out. We’ll cover the other two techniques in future posts.

2. Techniques to detect object and value types.

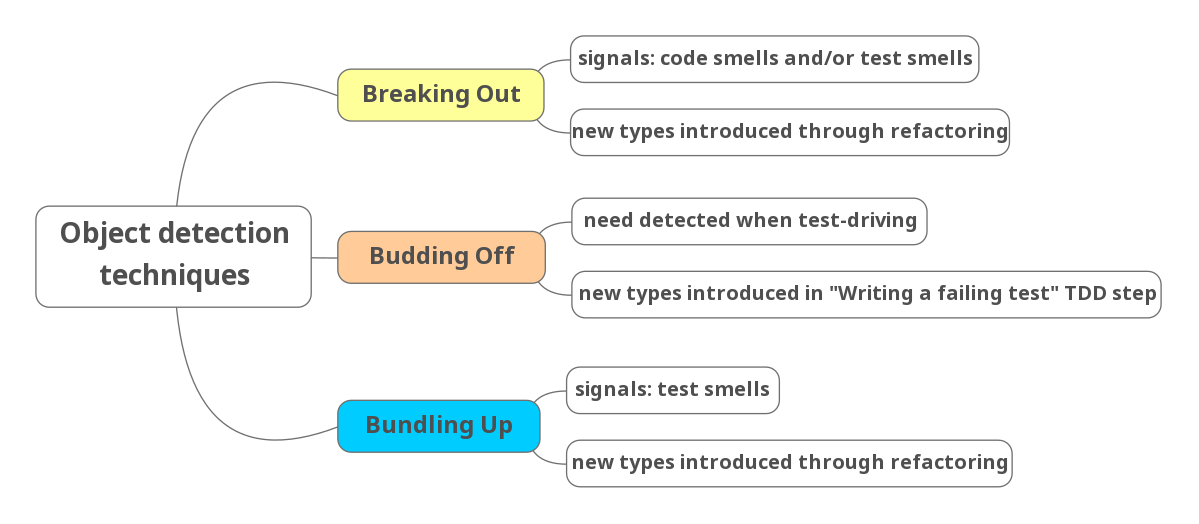

The GOOS book describes three techniques for discovering object types[2], some of which might be peers:

- Breaking out.

- Bundling up.

- Budding off.

These techniques identify recurring scenarios that indicate when introducing a new object improves a design.

We rely on both our ability to detect code smells, and on “listening to our tests” (using testability feedback to guide our design) to know when to apply a breaking out. The new object types are introduced through refactoring.

In the case of bundling up, we rely on “listening to our tests” to detect the need to apply it. Again the new object types are introduced through refactoring.

In contrast, we detect the need to apply a budding off by recognizing possible cohesion problems while writing a new failing test to drive new behaviour. In this technique we introduce the new object type from the test to prevent cohesion problems. Domain knowledge and previous experience are crucial to apply this technique well.

GOOS also describes three techniques for discovering value types that share the same names: breaking out, bundling up, and budding off. The mechanisms to introduce new values are similar to the ones described above for object types.

For values, bundling up is used to eliminate existing data clumps through refactoring, whereas budding off introduces new values from the outset to prevent primitive obsession or data clumps. Breaking out introduces new values by refactoring to improve cohesion.

In this post we focus on the breaking out technique.

3. Breaking out.

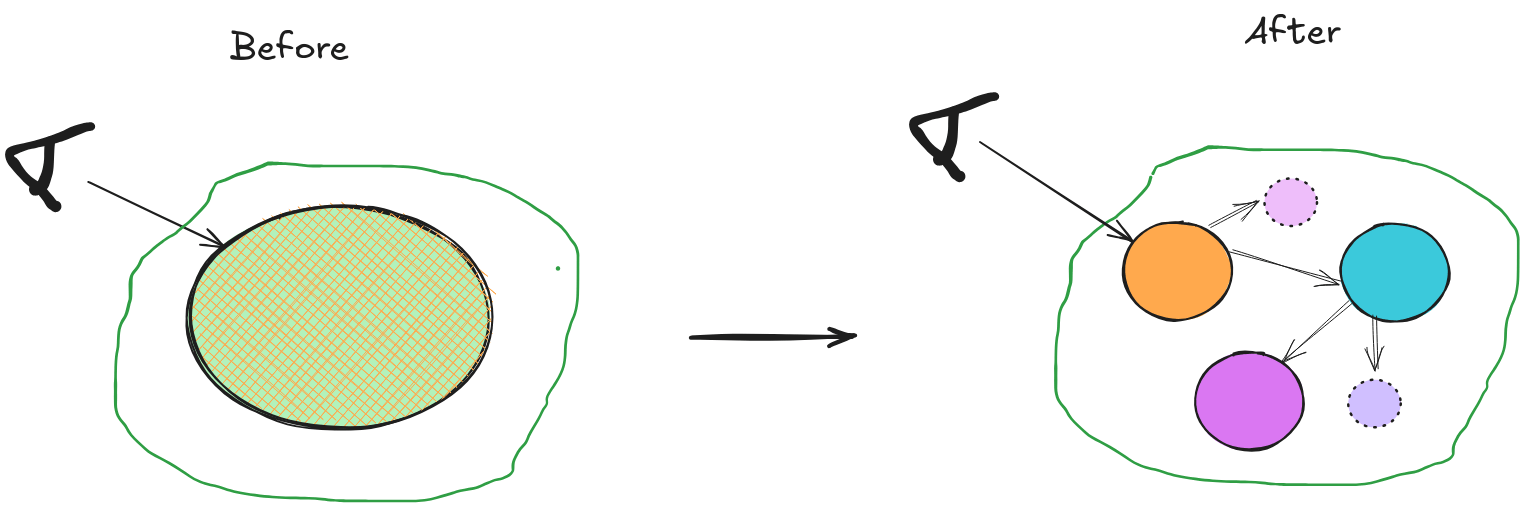

According to the GOOS authors, this technique consists in splitting a large object into a group of collaborating objects and/or values[4]. Let’s first describe the context in which we apply it.

3. 1. Context.

When starting a new area of code about which we don’t know much yet, we might temporarily suspend our design judgment. We’d just test-drive the behaviour through the public interface of an object without imposing much structure. Doing this gives us time to learn more about the problem and its boundaries before committing to a given structure. We can see this decision as taking on deliberate, prudent technical debt[5].

There are different approaches to get this “room” for learning, such as (from more to less structure-imposing):

- We might prefer to consider as peers only those behaviours that we can clearly match with a peer stereotype (dependencies, notifications or adjustments).

- We might consider as peers only the dependencies which are required to be able to write unit tests (FIRS violations). This approach would result in test boundaries similar to the ones that the classic style of TDD produces[6].

- We might even decide to test-drive the behaviour using only integration tests[7]. In this “extreme” approach, we might end with no peers at all, although we’ll likely need to at least isolate the tests from dependencies that violate the Repeatable and Self-validating properties from FIRS[8].

3. 2. Signals that a breaking out is necessary.

After a short while, the object we are test-driving will become too complex to understand because of its poor cohesion[9]. We’ll likely observe code smells such as Divergent Change, Large Class, Data Clump, Primitive Obsession or Feature Envy.
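To make these smells more concrete, here is a minimal sketch of an object whose cohesion has started to degrade. Everything in it is an assumption made for illustration: the class name borrows from the example mentioned in note [10], but the code, the method names and the made-up validation rule (eight digits followed by a letter) are hypothetical.

```python
class UserAccountCreation:
    """Hypothetical object mixing two responsibilities (illustrative only)."""

    def __init__(self):
        self._names_by_document = {}

    def create(self, name: str, document_id: str) -> bool:
        # Responsibility 1: validating the document id.
        # Primitive Obsession: the id is a raw string picked apart in place.
        # The rule (8 digits + 1 letter) is invented for this sketch.
        if len(document_id) != 9:
            return False
        digits, letter = document_id[:8], document_id[8]
        if not digits.isdigit() or not letter.isalpha():
            return False

        # Responsibility 2: registering the account.
        # Divergent Change: this method changes when either the validation
        # rules or the registration rules change.
        if document_id in self._names_by_document:
            return False
        self._names_by_document[document_id] = name
        return True
```

Even at this small size, any test of the validation rules has to go through `create`, which is how the lack of cohesion starts leaking into the tests.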

This lack of cohesion may also manifest as testability problems (test smells). The tests are verifying many behaviours at the same time, which means they have a big scope and a lack of focus. This can lead to difficulties in testing the object’s behaviour[10], or to test failures that become difficult to interpret. We’ll analyze these testability problems in more detail later.

Note that the impact of poor cohesion on testability may take longer to appear as test smells than as code smells in production code. Therefore, we should monitor the emergence of code smells, as they often serve as earlier indicators that a breaking out may be needed.

Once we detect that a breaking out is needed, we should not wait long to apply it, because the longer we defer it, the more friction we’ll face when adding new features and the more expensive applying the breaking out will become[11]. These effects correspond to the recurring and accruing interests of technical debt, respectively. The GOOS authors express their concern that if we defer this cleanup due to time pressure, we may have to pay for it at a moment when we “could least afford it”. This may make us feel forced to defer the refactoring even more, making the tech-debt snowball even bigger.

3. 3. Applying a breaking out.

We apply it by splitting a large object into smaller collaborating objects and values that each follow the Single Responsibility Principle[12]. This refactoring improves overall cohesion by producing a composite object made up of the original object and the extracted objects and values that it now coordinates. After the breaking out, the original object serves as a façade for the extracted new types and, as the entry point to the composite object, it remains the only object visible to the tests and the rest of the system.
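The following sketch shows one possible shape of the result of a breaking out. It is not taken from GOOS: the names `DocumentId`, `AccountRegistry` and `UserAccountCreation`, as well as the validation rule, are hypothetical and only serve to illustrate a façade coordinating an extracted value and an extracted object.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DocumentId:
    """Extracted value (hypothetical): immutable and compared by content."""
    text: str

    def is_valid(self) -> bool:
        # Invented rule for this sketch: 8 digits followed by 1 letter.
        digits, letter = self.text[:8], self.text[8:]
        return len(self.text) == 9 and digits.isdigit() and letter.isalpha()


class AccountRegistry:
    """Extracted object (hypothetical): its state changes over time."""

    def __init__(self):
        self._names_by_document = {}

    def register(self, document_id: DocumentId, name: str) -> bool:
        if document_id in self._names_by_document:
            return False
        self._names_by_document[document_id] = name
        return True


class UserAccountCreation:
    """Original object: now a facade for the extracted types."""

    def __init__(self):
        # Created here rather than injected, so clients and tests
        # never see the registry.
        self._registry = AccountRegistry()

    def create(self, name: str, document_id: str) -> bool:
        candidate = DocumentId(document_id)
        if not candidate.is_valid():
            return False
        return self._registry.register(candidate, name)
```

Clients keep calling `UserAccountCreation().create(name, document_id)` exactly as before; only the internal structure has changed.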

3. 3. 1. What kind of objects were extracted?

Values are not objects by definition, so we don’t need to consider them. This focuses the discussion on the object types introduced by the breaking out.

After a breaking out, only the original object is visible to the tests and the rest of the system. The new object types are only “seen” by the original object that owns them. Furthermore, we could inline them back into private methods of the original object without affecting the tests or any of its other clients. This means they are an internal detail of the original object; therefore, they are being treated as internals, not peers, of the original object.

Some of those object types will remain as internals, whereas others may be “promoted” to peers. We explain the reasons that make us decide to promote an internal object to be a peer in our next post about breaking out.

3. 4. What about the tests?

After removing the cohesion problems from the production code, what should we do with the tests? They are still testing the behaviours of the original object and its collaborating objects and values through the interface of the original object. This means they are unfocused and likely large.

The newly extracted internal objects and values provide interfaces that let us test their behaviours independently. We could add more fine-grained and focused tests for them using those interfaces, but should we?

Not necessarily. We should only do it if it’s worth the effort. We may decide to defer testing the new types independently until it brings a clear benefit, or even decide not to test some of them if that doesn’t cause testability problems that are too painful.

Before deciding, we should take a closer look at the current tests. Let’s examine any testability problems we may find in them and see how they relate to the desirable test properties Kent Beck describes in his test desiderata.

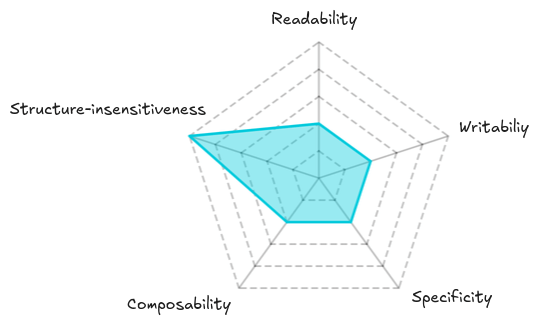

We will specifically examine the following properties: readability, writability, specificity, composability and structure-insensitivity[13].

3. 4. 1. Disadvantages.

- Poor readability and writability.

The size and lack of focus of the composite object’s tests in themselves can make them harder to understand, which causes poor readability.

In addition, the inputs and outputs we use in some of those tests might be very different from the ones used by some internal behaviour we’re trying to validate. This happens because the greater the distance between the entry point and the interface of the internal behaviour, the more likely it is that both input and output have been transformed by an intermediate behaviour. This difference in the inputs and outputs at both interfaces might make the tests harder to understand, meaning even poorer readability, and costlier to write, introducing writability problems.

- Poor specificity.

Another test smell we usually find in unfocused tests is test failures that are difficult to interpret. This means the tests may also have poor specificity.

- Poor composability.

When we compose two behaviours, and each behaviour can vary in multiple ways (e.g., different inputs, configurations, or states), the number of required test cases to exhaustively test all combinations is equal to the cardinality of the Cartesian product of their variations. As we compose more behaviors (or parameters), the total number of possible test cases grows multiplicatively, leading to a combinatorial explosion of test cases.

Composability is useful for avoiding this phenomenon. By composing different dimensions of variability that result from separating concerns, we can test each dimension separately and then have only a few tests that verify their combination. This allows the number of test cases to grow additively instead of multiplicatively, while still providing confidence that the tests are sufficient.
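A small worked example may help to see the difference between multiplicative and additive growth. The three dimensions of variability and their cases below are invented for illustration, and so is the number of tests assumed to be enough to verify the combination.

```python
from itertools import product

# Hypothetical dimensions of variability and their cases.
document_id_cases = ["valid", "too short", "non-numeric prefix", "missing letter"]
email_cases = ["valid", "missing @", "empty"]
age_cases = ["adult", "minor"]

dimensions = [document_id_cases, email_cases, age_cases]

# Exhaustive testing through a single entry point:
# one test case per element of the Cartesian product.
exhaustive = len(list(product(*dimensions)))

# Testing each dimension separately, plus a few tests of the combination.
combination_tests = 2  # assumption: e.g. one all-valid case and one failing case
separate = sum(len(cases) for cases in dimensions) + combination_tests

print(exhaustive)  # 4 * 3 * 2 = 24
print(separate)    # 4 + 3 + 2 + 2 = 11
```

Adding a fourth dimension with five cases would take the exhaustive count to 120, while the separated count would only grow to 16.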

To test-drive complex behaviour, keeping a short feedback loop, we need to be able to break it into smaller increments of behaviour (different dimensions of variability). This ability is known as behavioural composition. Test-driving a complex behaviour through the composite object’s interface might make it difficult to apply behavioural composition, thereby impairing our ability to apply TDD[14].

If we face difficulties in composing behaviours, it means that the original tests have low composability.

3. 4. 2. One advantage.

- High structure-insensitivity.

Not everything is bad about the original tests: because they know nothing about the internal details of the original object, they have high structure-insensitivity, which helps reduce refactoring costs.
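As a rough illustration of structure-insensitivity, the sketch below has two hypothetical implementations of the same behaviour: one keeps everything in private methods, and the other delegates to an extracted internal. All names and the validation rule are assumptions made for this example. A check written only against the public `create` method observes the same results for both.

```python
def _looks_valid(document_id):
    # Invented rule for this sketch: 8 digits followed by 1 letter.
    return (len(document_id) == 9
            and document_id[:8].isdigit()
            and document_id[8].isalpha())


class InlinedCreation:
    """Variant 1: validation lives in a private method."""

    def __init__(self):
        self._names_by_document = {}

    def create(self, name, document_id):
        if not self._is_valid(document_id):
            return False
        if document_id in self._names_by_document:
            return False
        self._names_by_document[document_id] = name
        return True

    def _is_valid(self, document_id):
        return _looks_valid(document_id)


class DocumentIdValidator:
    """Internal extracted by a breaking out (hypothetical)."""

    def is_valid(self, document_id):
        return _looks_valid(document_id)


class BrokenOutCreation:
    """Variant 2: same public interface, different internal structure."""

    def __init__(self):
        self._validator = DocumentIdValidator()
        self._names_by_document = {}

    def create(self, name, document_id):
        if not self._validator.is_valid(document_id):
            return False
        if document_id in self._names_by_document:
            return False
        self._names_by_document[document_id] = name
        return True


def observed_behaviour(make_creation):
    # Only the public interface is used, as in the original tests.
    creation = make_creation()
    return [
        creation.create("Ana", "12345678Z"),
        creation.create("Ana", "12345678Z"),
        creation.create("Bob", "1234"),
    ]
```

Since `observed_behaviour` never mentions `DocumentIdValidator`, renaming it, changing its interface or inlining it back would not require touching the check.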

In our next post we’ll use this analysis of test properties to identify the trade-offs involved in deciding whether we should refactor the tests now or later.

4. Conclusions.

In this post, we explained in depth the breaking out technique from Growing Object-Oriented Software, Guided by Tests (GOOS), which belongs to a family of techniques (breaking out, budding off, and bundling up) that help discover both object and value types.

Breaking out is useful to fix the cohesion problems that may arise from delaying design decisions until we learn more about the domain. Delaying those decisions may prove useful when facing volatile and poorly understood domains, and a breaking out becomes the way to pay off that conscious technical debt. We also described how to recognize the need to apply it, using code smells and, in more severe cases, testability problems as indicators of poor cohesion.

Applying a breaking out splits the initial object into smaller collaborating internal objects and values. The new objects created through this refactoring are treated as internals rather than peers since they remain invisible to the tests and other system parts. After this refactoring, the original tests still have poor readability, writability, specificity, and composability. Despite these disadvantages, such tests have high structure-insensitivity, meaning they are resilient to refactorings affecting the interfaces of the new types.

Our next post will focus on when and how to refactor the original tests. It will be through that refactoring that new peers may appear in our design.

Thanks for coming to the end of this post. We hope that what we explain here will be useful to you.

The TDD, test doubles and object-oriented design series.

This post is part of a series about TDD, test doubles and object-oriented design:

- The class is not the unit in the London school style of TDD.

- “Isolated” test means something very different to different people!.

- Heuristics to determine unit boundaries: object peer stereotypes, detecting effects and FIRS-ness.

- Breaking out to improve cohesion (peer detection techniques).

- Refactoring the tests after a “Breaking Out” (peer detection techniques).

- Bundling up to reduce coupling and complexity (peer detection techniques).

Acknowledgements.

I’d like to thank Emmanuel Valverde Ramos, Fran Reyes, Marabesi Matheus and Antonio de la Torre for giving me feedback about several drafts of this post.

Finally, I’d also like to thank icon0 for the photo.

References.

- Growing Object-Oriented Software, Guided by Tests, Steve Freeman and Nat Pryce.

- Thinking in Bets: Making Smarter Decisions When You Don’t Have All the Facts, Annie Duke.

- Test-Driven Design Using Mocks And Tests To Design Role-Based Objects, Isaiah Perumalla.

- Mock roles, not objects, Steve Freeman, Nat Pryce, Tim Mackinnon and Joe Walnes.

- Mock Roles Not Object States talk, Steve Freeman and Nat Pryce.

- Object Collaboration Stereotypes, Steve Freeman and Nat Pryce.

- The class is not the unit in the London school style of TDD, Manuel Rivero.

- “Isolated” test means something very different to different people!, Manuel Rivero.

- Heuristics to determine unit boundaries: object peer stereotypes, detecting effects and FIRS-ness, Manuel Rivero.

Notes.

[1] These are the posts mentioned in the introduction:

- The class is not the unit in the London school style of TDD, in which we commented on, among other things, the importance of distinguishing an object’s peers from its internals in order to write maintainable unit tests.

- Heuristics to determine unit boundaries: object peer stereotypes, detecting effects and FIRS-ness, in which we explained how the peer stereotypes helped us identify an object’s peers.

[2] In a section titled Where Do Objects Come From?. We mention the section because we think that its title is important to understand the context of these techniques. It is located in chapter 7, Achieving Object-Oriented Design.

[3] This mind map comes from a talk which was part of the Mentoring Program in Technical Practices we taught last year in AIDA.

[4] The techniques for value types are explained in the section Value Types, and the ones for object types are explained in the subsection Breaking Out: Splitting a Large Object into a Group of Collaborating Objects from the section Where Do Objects Come From?. Both sections are in chapter 7 of the GOOS book: Achieving Object-Oriented Design.

[5] See Martin Fowler’s post Technical Debt Quadrant.

[6] We talked about how this approach produces boundaries similar to the ones obtained applying the classic style of TDD in our post Heuristics to determine unit boundaries: object peer stereotypes, detecting effects and FIRS-ness.

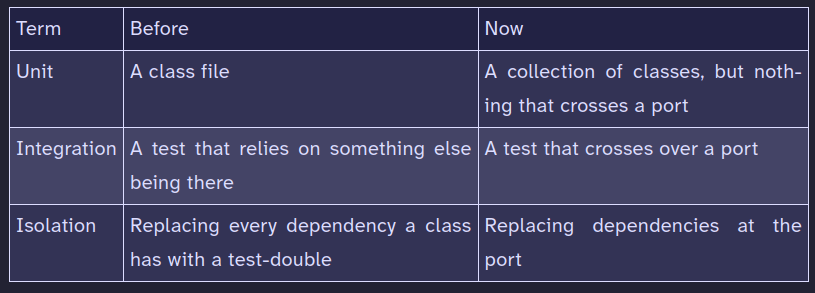

Steve Fenton’s posts My unit testing epiphany and My unit testing epiphany continued describe this approach well. His epiphany consisted in realizing the class is not the unit. His explanation is influenced by Ian Cooper’s 2013 influential talk TDD, Where Did It All Go Wrong and uses hexagonal architecture’s port concept. The following table expresses how he understood some concepts before his epiphany and how he understands them after it:

[7] In this discussion we consider integration tests as tests that check whether different units work together as expected, but remember that we don’t consider the class as the unit. Integration tests can, and usually do, involve external systems, the ones you leave out to get isolated and faster unit tests (database, file, shared memory, etc.).

[8] We need integration tests to at least be Repeatable to avoid flakiness, and Self-validating to enable test automation. Have a look at the discussion about integration tests in our post “Isolated” test means something very different to different people!.

[9] This time might be shorter than we think. In the paper When and Why Your Code Starts to Smell Bad, Tufano et al. surprisingly found that the changes that were the root cause of cases of the code smell Blob class (a.k.a. Large Class) were already introduced when those classes were created, and not through their evolution over time, as is widely thought. In any case, the longer we wait to fix cohesion problems, the harder it will get to fix them.

[10] This code is an example of unfocused tests that are becoming too large to validate the object’s behaviour easily (it was used in a mentoring program we did last year in AIDA).

Only a couple of the test cases in UserAccountCreationTest serve to check the behaviour of UserAccountCreation. Most of them are actually testing the logic to validate the user’s document id. Since we still need to test-drive other behaviours to validate other parts of the user data, those tests will grow even larger and more unfocused. That’s why we think we should refactor these tests before we keep on developing.

It’s kind of difficult to create synthetic examples of tests that express this problem well. A real example would likely be much larger and more complex than the example we showed you here. We hope the message, somehow, gets across.

[11] If the lack of cohesion has gone too far, reading Split Up God Class from Object-Oriented Reengineering Patterns, chapter 20 of Working Effectively with Legacy Code or the Splinter pattern from Software Design X-Rays might be helpful. You may also have a look at an interesting documented example of refactoring a large class: Refactoring: This class is too large.

These techniques won’t be necessary if we don’t defer the breaking out too much.

[12] We may achieve that splitting of responsibilities by applying refactorings such as Extract Class, Replace Conditional Logic With Strategy, etc.

[13] There are many other desirable test properties in Beck’s test desiderata, but we considered that readability, writability, specificity, composability and structure-insensitivity were the most relevant to discuss the trade-offs involved in a breaking out.

[14] We think that composability is one of the test properties that has the most impact on our ability to do TDD in short feedback loops (small behaviour increments). Sometimes the only way to be able to apply behavioural composition is to introduce a separation of concerns in the production code.